Every aspect of our lives, from life-saving medical advancements and transportation safety and security to economic viability and even the convenience of selecting a movie or a book, can be improved through better data analytics built on Data Science.

Data is an ever-growing byproduct of our daily lives. A rising number of devices and processes collect and store data every second, from your phone and thermostat to your car, the roadway on which you drive, and every aspect of your day at work.

As we move from fragmentation and silos to an ever-connected and recorded future, data is becoming the new currency and a vital manufactured resource. The power, importance, and responsibility that such data stewardship will demand of us in the coming decades are hard to imagine, and yet most will fail to fully appreciate the insights data can provide.

Businesses that do not rise to the occasion and garner insights from this new resource are destined for failure.

Opportunity Costs | Data Science is an emerging field, and opportunity costs arise when a competitor implements it and generates value from data before you do. Competitors that successfully leverage Data Science to gain insights can drive differentiated customer value propositions and lead their industries as a result.

Abundance of Data | Huge amounts of data are generated and stored every instant. Data Science can transform that data into intelligence that helps improve existing processes. Operating costs can be driven down dramatically by putting the complex interrelationships in data to work.

Advancements in Technology and Algorithms | Faster CPUs and GPUs, along with nearly weekly advancements in supporting libraries and algorithms, make development much faster and cheaper than ever before.

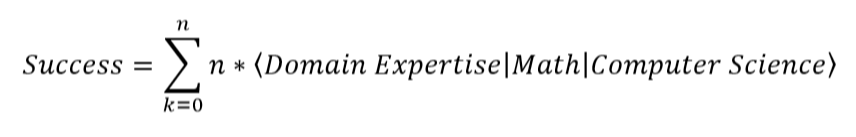

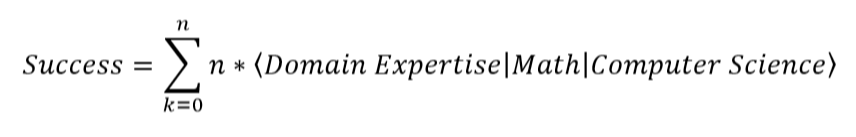

Truth be told, Data Science is a complex field. It is difficult, intellectually taxing work that requires the sophisticated integration of talent, tools, and techniques. To make it manageable, we break the process into three simple domains with simplified activities, so that the application can be easily understood and executed.

Features | This domain focuses on preparing the data which you will feed into the learning models.

Models | This domain focuses on consuming the prepared data as tensors, working through candidate models, and selecting the best one without letting perfection be the enemy of good. Perfection does not exist in machine learning.

Decisions | This domain focuses on the deployment and consumption of the final model.
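To make the three domains concrete, here is a minimal sketch in plain Python. Everything in it is an assumption made for illustration: the toy data, the min-max scaling, the two candidate models, and the names `make_scaler`, `fit_mean`, `fit_line`, and `predict` are hypothetical and do not describe any particular production workflow.

```python
from statistics import mean

# Hypothetical toy data: (input, target) pairs invented for illustration only.
train = [(1.0, 2.1), (2.0, 3.9), (3.0, 6.2), (4.0, 7.8)]
holdout = [(5.0, 10.1), (6.0, 11.9)]


def make_scaler(rows):
    """Features: learn a min-max scaling from the training inputs only."""
    xs = [x for x, _ in rows]
    lo, hi = min(xs), max(xs)
    return lambda x: (x - lo) / (hi - lo)


def fit_mean(rows, scale):
    """Models, candidate 1: always predict the average training target."""
    avg = mean(y for _, y in rows)
    return lambda x: avg


def fit_line(rows, scale):
    """Models, candidate 2: least-squares line on the scaled input."""
    xs = [scale(x) for x, _ in rows]
    ys = [y for _, y in rows]
    mx, my = mean(xs), mean(ys)
    slope = (sum((x - mx) * (y - my) for x, y in zip(xs, ys))
             / sum((x - mx) ** 2 for x in xs))
    intercept = my - slope * mx
    return lambda x: intercept + slope * scale(x)


def mse(model, rows):
    """Mean squared error of a model on a set of (input, target) rows."""
    return mean((model(x) - y) ** 2 for x, y in rows)


# Features: prepare the data the models will consume.
scale = make_scaler(train)

# Models: fit the candidates and keep the one with the lowest holdout error.
candidates = {"mean": fit_mean(train, scale), "line": fit_line(train, scale)}
best_name = min(candidates, key=lambda name: mse(candidates[name], holdout))

# Decisions: the selected model is what gets deployed and consumed.
predict = candidates[best_name]
print(best_name, round(predict(5.0), 2))
```

The selection step settles for the better of two simple candidates on held-out data instead of searching for a perfect model, which is the spirit of the Models domain described above.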

information (at) biteconomics (dot) com
